Is Performance Funding in Higher Education Effective? Too Early to Call

In response to stagnant graduation rates and climbing tuition prices, many state leaders have adopted performance funding systems for their public universities and community colleges. Our previous blog described how performance funding attempts to improve student outcomes by tying state funding to a prescribed set of metrics, such as the number of students who graduate.

Today, 25 states operate some form of performance funding for higher education. But how effective have these systems been in improving student outcomes? Though it may seem like a simple question, the answer is clouded by a lack of evaluation, competing state initiatives, and the fact that no two systems have the same design.

Newer performance funding models have yet to establish a track record

Performance funding has evolved significantly since Tennessee adopted the first model in 1979. The early performance funding systems, now called Performance Funding 1.0 (PF 1.0), tended to be lower-stakes and less sophisticated than newer models. In general, early versions rewarded an institution with a “bonus” on top of the state’s base funding if it performed well on the prescribed set of metrics, such as increasing the number of graduates. These bonuses tended to be relatively small, around 1 to 5 percent of a state’s base funding, leading to claims that the carrot was insufficient to influence institutional behavior. Indeed, most analyses have found that PF 1.0 has no statistically significant effect on student outcomes.

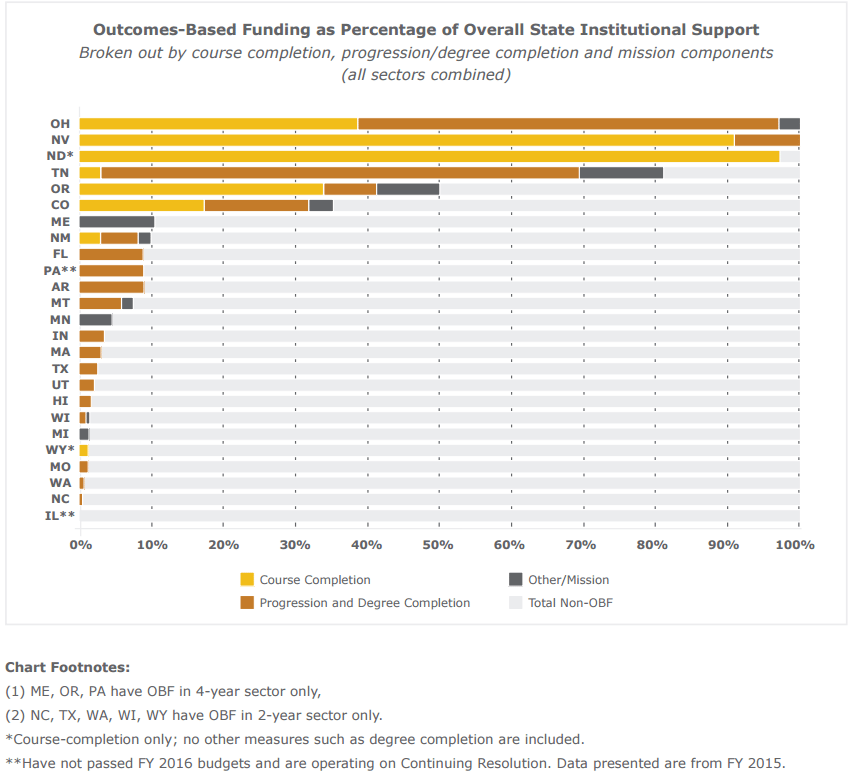

The past several years have seen the emergence of Performance Funding 2.0 (PF 2.0). These systems raise the stakes by integrating performance metrics into a state’s base funding formula (rather than as an additional carrot), meaning that state base funding for universities is dependent on student performance. Furthermore, PF 2.0 systems tend to allocate higher amounts of funding on a performance basis. Some states tie close to 100 percent of base funding to performance.

Performance Funding 2.0 may be effective in improving student outcomes, but several more years of study are needed.

Although the effectiveness of PF 2.0 systems has not been sufficiently studied, the existing evidence points to more promising results than for PF 1.0. For example, one study found that performance funding led to an increase in the number of associate’s degrees and certificates granted by two-year colleges. Research also indicates that performance funding can cause institutions to: increase spending on student services and instruction; change curricula or instructional delivery; and improve counseling, academic advising, and retention services. However, some remain skeptical that this approach will have any effect. Reports have also emerged of unintended consequences that are either occurring or could occur as a result of PF 2.0, such as weakening academic standards and increasing restrictions on student admissions.

Unfortunately, several more years may be needed before researchers can accurately gauge the effects of PF 2.0 systems on student outcomes. A recent study found that performance funding can take up to seven years to produce results, as institutions need time to adapt effectively.

Performance funding systems are diverse, and do not operate in a vacuum

Performance funding is far from the only variable that can affect student outcomes. Student success is often determined by a host of socio-economic, institutional, and individual factors. In addition, many state governments have programs in place designed to help students, making it difficult to tease out the effects of performance funding. For instance, the state of Tennessee recently implemented “Tennessee Promise,” a program that offers free community college and is drastically changing the demographics of the state’s college students. As a result, Tennessee may struggle to tell which policy is responsible for any improvements when it tries to assess the two independently. Furthermore, different state systems have different levels of institutional buy-in, a factor that can be difficult to quantify but one that can greatly affect the effectiveness of any performance funding system.

Source: HCM Strategies, Driving Better Outcomes: Fiscal Year 2016 State Status & Typology Update, 2016.

To make things more complicated, no two performance funding systems are identical. In fact, most are fundamentally different, a reflection of varying state values and priorities. Indiana, for example, ties less than 10 percent of funding to an institution’s performance, while Ohio and Tennessee base funding almost entirely on performance metrics. Some states tie performance only to the number of graduates, whereas others track credit completion or even labor force outcomes. The fact that there is no one-size-fits-all approach to performance funding hampers any effort to generalize about the effect it has on student outcomes. The variation also limits the information that can be derived from broadly comparing student outcomes in states with PF systems versus states without.

No two performance funding systems are identical, making it difficult to assess overall effectiveness.

In sum, external factors combined with the diversity of performance funding systems set a complicated stage for analyzing the effectiveness of these models. Still, finding more concrete evidence may simply take time. As PF 2.0 systems take hold and additional data is collected, we should see a clearer picture of what is and is not working. In the meantime, policymakers should continue to innovate, while remaining vigilant to prevent harmful unintended consequences that may arise. Ultimately, if PF 2.0 systems prove successful, state policy could begin to coalesce more firmly around a set of evidence-based best practices.
